Muse TECHNOLOGIES

Music and Language Theories

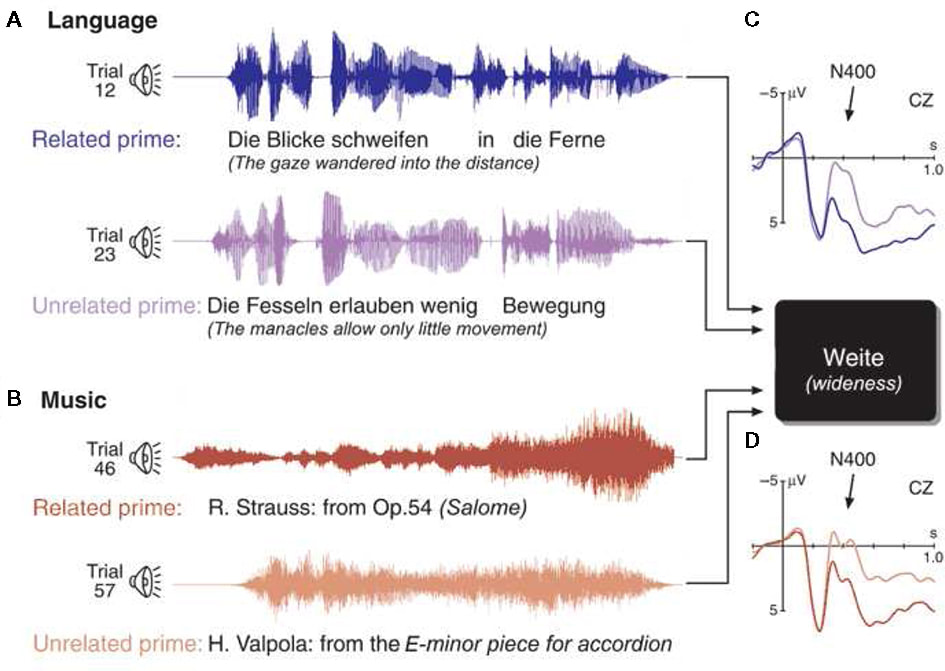

The way language is perceived in the brain and the way music is perceived in the brain “overlap in important ways” (Patel 1). Neuroimaging research has supported the case for “syntactic overlap by showing that musical syntactic processing activates ‘language’ areas of the brain” (Patel 1). This finding came from recording brain activity while musicians listened to sentences and to musical chord sequences: the same parts of the brain were active in both cases. The neuroimaging evidence helps explain why music can improve one’s language and speech abilities, as stated on the Memory, Focus, and IQ webpage.

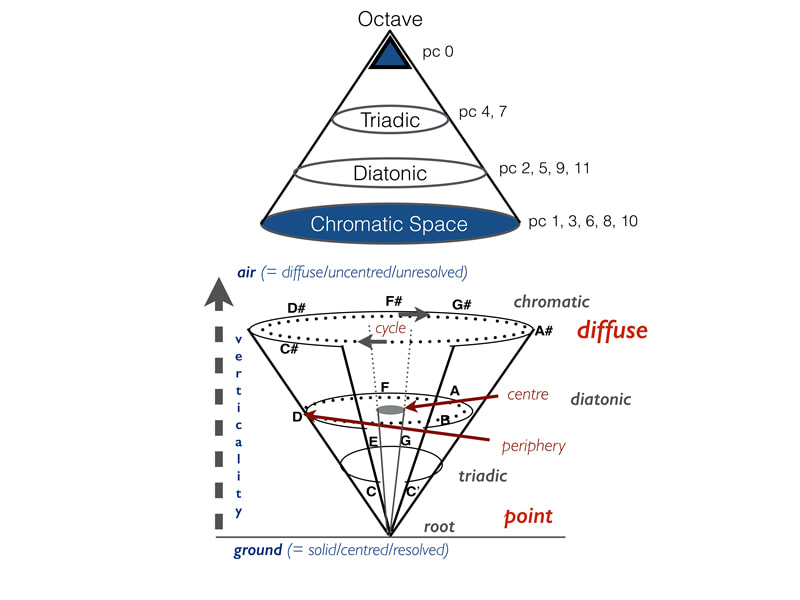

While music and language have been found to be processed in the same regions of the brain, “the mental representations of linguistic and musical syntax are quite different” (Patel 2). Two leading theories describe how each is perceived. The first is Gibson’s Dependency Locality Theory of language processing, which states that “linguistic sentence comprehension involves two distinct components” (Patel 2). The first component is structural storage, which keeps track of a sentence as it unfolds in time. The second component is structural integration, which connects the words of a sentence based on its structure. The theory for musical perception is Lerdahl’s Tonal Pitch Space theory, which states that in music “there is a hierarchical structure of importance such that some pitch classes are perceived as more stable and central than others” (Patel 5). For example, in the key of C major, C (the root) is the most stable, followed by G (the fifth), then E (the third). Lerdahl’s theory also “provides an algebraic model for quantifying the tonal distance between any two musical chords in a sequence” (Patel 5). This points to the main difference between the perception of language and music: music is perceived in a hierarchical manner rather than a sequential one, while language perception follows a sequential order.
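The stability ranking in the C major example can be sketched in a few lines of Python. This is a simplified illustration, not Lerdahl’s chord-distance formula: it assumes the commonly described layered form of the theory, in which the pitch classes of a key are arranged in nested levels (root, fifth, triad, diatonic scale, chromatic scale), and it scores a pitch class by how many levels contain it. The level names, the `stability` function, and the scoring rule are assumptions made for this example and do not come from the page or from Patel’s text.

```python
# Illustrative sketch only: a layered pitch-class hierarchy for the C major
# chord in the key of C major. Pitch classes are numbered 0-11 with C = 0.
# The level names and the scoring rule are assumptions for this example.
BASIC_SPACE_C_MAJOR = {
    "root": {0},                         # C
    "fifth": {0, 7},                     # C, G
    "triad": {0, 4, 7},                  # C, E, G
    "diatonic": {0, 2, 4, 5, 7, 9, 11},  # C major scale
    "chromatic": set(range(12)),         # all twelve pitch classes
}

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def stability(pitch_class: int) -> int:
    """Count how many levels of the hierarchy contain the pitch class."""
    return sum(pitch_class in level for level in BASIC_SPACE_C_MAJOR.values())


def ranked_by_stability() -> list[tuple[str, int]]:
    """Return (note name, score) pairs, most stable first."""
    scores = [(NOTE_NAMES[pc], stability(pc)) for pc in range(12)]
    # sorted() is stable, so ties keep their ascending pitch-class order.
    return sorted(scores, key=lambda item: -item[1])


if __name__ == "__main__":
    for name, score in ranked_by_stability():
        print(f"{name}: {score}")
```

Under these assumptions the first three entries are C, G, and E, matching the order given above; the remaining scale tones tie below them, and the notes outside the key rank lowest.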